The Data Leader’s Journey: From Gigabytes to Petabytes

Source: Äri-IT Kevad 2025

Author: Dmitri Oništšik, Data Architect

How to solve reporting platform scaling issues when business growth outpaces technological capabilities?

Imagine this: your company is experiencing rapid growth – order volumes have skyrocketed from thousands to hundreds of thousands. Your reporting platform is under immense pressure, and it’s your job to fix it. Seems simple enough, right? Just scale up, add more power and resources, and problem solved. But it’s not always that straightforward.

Research shows that doubling team size rarely leads to a proportional increase in productivity. In fact, performance often takes a noticeable hit. Does this apply to data platforms too?

The Challenges of Growing Data Volumes

In the early 2000s, Silicon Valley giants like Yahoo, Amazon, Google, and Facebook, dealing with unprecedented data volumes, faced numerous data processing and management hurdles. The tools and technologies of the time couldn’t handle the ever-growing data. The internet and social media boom fueled this surge, with more people online creating more content by the minute.

The last decade has seen mobile devices and the Internet of Things further amplify this data explosion. On average, data volumes grow by over 20% annually. This growth not only exacerbates existing issues but also introduces unforeseen challenges.
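To make the growth figure concrete, here is a minimal sketch of the compound-growth arithmetic. It takes the 20% annual rate quoted above as a lower bound; the 10 TB starting volume and the function names are illustrative assumptions, not figures from any real platform.

```python
import math


def years_to_multiply(annual_growth: float, factor: float) -> float:
    """Years of compound growth needed for volume to grow by `factor`."""
    return math.log(factor) / math.log(1.0 + annual_growth)


def projected_volume(start_tb: float, annual_growth: float, years: int) -> float:
    """Volume after `years` of compound growth at `annual_growth` per year."""
    return start_tb * (1.0 + annual_growth) ** years


# Assumed inputs: 20% yearly growth and a hypothetical 10 TB starting point.
doubling_years = years_to_multiply(0.20, 2.0)      # roughly 3.8 years
volume_after_5 = projected_volume(10.0, 0.20, 5)   # roughly 24.9 TB
```

At that rate a platform sized comfortably for today's data is handling twice the volume in under four years, which is why a one-off hardware upgrade tends to buy only a little time.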

Understanding Data Characteristics

“Big data” was a concept long before the 2000s, but it wasn’t a universal concern. Today, almost any business can encounter big data challenges. The “three Vs” – volume, velocity, and variety – define big data. Volume refers to the sheer amount of data, velocity to the speed at which data arrives and must be processed, and variety to the range of data types. While all three are crucial, this article focuses on volume, as it demands significant adjustments to tools and processes.

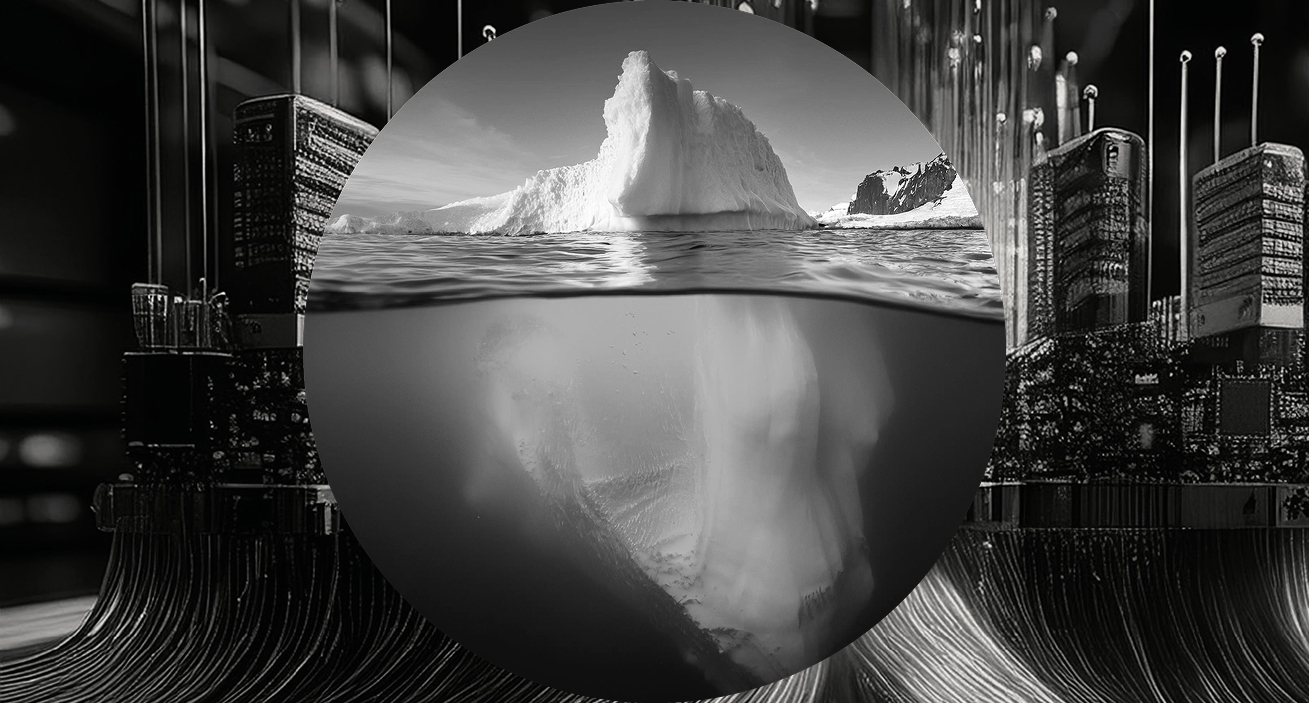

Most data-related problems stem from two factors: technological limitations and a lack of essential data governance processes. As volumes increase, current tools and architectures may falter because they lack horizontal scalability. Picture a single database server at 100% capacity, struggling to deliver reports on time. You’ve faced this before, so you upgrade the server’s memory. It helps, but only temporarily. This is vertical scaling: adding more resources to a single system. Sooner or later you hit a ceiling, because you can’t keep adding more powerful components indefinitely.

Scaling Your Data Infrastructure

The solution? Distribute the workload across multiple, less expensive machines. This is horizontal scaling. In theory, you can add an unlimited number of these machines, handling any data volume. Modern data platforms are built on these scalability principles:

- Decoupled compute and storage: Scale storage independently of compute power, and vice versa.

- Parallel data processing: Break down tasks and process them simultaneously across multiple engines.

- Incremental data loads: Process only new data during ETL, not the entire dataset each time (common in older platforms).
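The last two principles can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production design: the `Order` record, the `order_id` watermark column, and the per-region totals are all hypothetical, and a thread pool stands in for the separate machines a real platform would use.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    order_id: int   # assumed to increase monotonically; used as the watermark
    region: str
    amount: float


def select_new_rows(source, last_loaded_id):
    """Incremental load: keep only rows past the stored watermark."""
    return [row for row in source if row.order_id > last_loaded_id]


def partition_by_region(rows):
    """Split the work into independent partitions, one per region."""
    partitions = defaultdict(list)
    for row in rows:
        partitions[row.region].append(row)
    return dict(partitions)


def sum_partition(item):
    """Aggregate a single partition; runs independently of the others."""
    region, rows = item
    return region, sum(r.amount for r in rows)


def load_increment(source, totals, last_loaded_id, max_workers=4):
    """Process only new rows, in parallel, and return updated totals and watermark."""
    new_rows = select_new_rows(source, last_loaded_id)
    if not new_rows:
        return totals, last_loaded_id
    partitions = partition_by_region(new_rows)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partial_sums = list(pool.map(sum_partition, partitions.items()))
    updated = dict(totals)
    for region, subtotal in partial_sums:
        updated[region] = updated.get(region, 0.0) + subtotal
    new_watermark = max(r.order_id for r in new_rows)
    return updated, new_watermark


# First run loads everything; the second run touches only the one new order.
source = [Order(1, "EE", 10.0), Order(2, "LV", 5.0)]
totals, watermark = load_increment(source, {}, last_loaded_id=0)
source.append(Order(3, "EE", 2.5))
totals, watermark = load_increment(source, totals, watermark)
```

The stored watermark is what keeps each run proportional to the new data rather than to the whole history. Because each partition is aggregated independently, adding workers (or, on a real platform, machines) is what lets throughput grow with volume.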

As a data leader, you must understand that vertical and horizontal scaling require different architectures, infrastructure, network topologies, and skill sets. Moving from one to the other is a major undertaking, not a “simple upgrade.” It is a fundamental shift in mindset that involves new principles, tools, and technologies. Orchestration becomes paramount and monitoring becomes more complex, so both demand careful planning. If budgets are tight, keep a close eye on costs. Don’t rely solely on pricing calculators and vendor promises; the real bill is almost always higher than anticipated because of system complexity. Beginners often underestimate costs such as network infrastructure, which were negligible with a single machine. With dozens of interconnected components, network expenses become significant.

Evolving Data Governance and Processes

Why do processes change? As data volume grows, so does its variety: new HR systems, GPS sensor data, and more. Initially, a few dozen tables were manageable by a small team. Now, hundreds of tables exist, with no one fully understanding the data. This is what happens without proper data governance.

It’s time to focus on metadata management, foster a data-driven culture, and educate stakeholders on why data governance matters. Without governance, the consequences can be severe. Organizations must recognize the need for more structure and formality.
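As one small example of what metadata management can mean in practice, the sketch below keeps an owner and a description for every table and flags the tables that lack either. The `TableMetadata` record, the table names, and the team name are hypothetical; real catalog tools track far more than this.

```python
from dataclasses import dataclass


@dataclass
class TableMetadata:
    name: str
    owner: str = ""         # team or person accountable for the table
    description: str = ""   # what one row in the table represents


def find_undocumented(catalog):
    """Return names of tables that are missing an owner or a description."""
    return sorted(
        t.name
        for t in catalog
        if not t.owner.strip() or not t.description.strip()
    )


# Hypothetical catalog entries for illustration only.
catalog = [
    TableMetadata("orders", owner="sales-data-team",
                  description="One row per customer order"),
    TableMetadata("gps_events", description="Raw GPS sensor readings"),
    TableMetadata("hr_staging_tmp"),
]
undocumented = find_undocumented(catalog)
```

A check like this, run regularly, turns “no one fully understands the data” into a concrete list of tables that still need an owner.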

You can’t afford to give every data analyst and engineer full admin privileges. You can’t rely on a single “know-it-all” expert; the system is too large and complex. Team collaboration, knowledge sharing, and defined responsibilities are crucial. Establish access control, define processes, and invest in disaster recovery and business continuity plans. Don’t expect your team to scale without guidance; it won’t happen. Small errors can escalate, causing significant business and reputational damage.
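The access-control point can be illustrated with a minimal role-based check. The role names and permissions below are assumptions chosen for the example; in practice these rules would live in the data platform’s own access management rather than in application code.

```python
# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "engineer": {"read", "write"},
    "platform_admin": {"read", "write", "grant", "drop"},
}


def is_allowed(role: str, action: str) -> bool:
    """Least privilege: anything not explicitly granted is denied."""
    return action in ROLE_PERMISSIONS.get(role, set())


analyst_can_read = is_allowed("analyst", "read")    # True
analyst_can_drop = is_allowed("analyst", "drop")    # False
unknown_role = is_allowed("contractor", "read")     # False: unknown roles get nothing
```

The design choice worth noting is the default: an unknown role or an unlisted action is denied, so a mistake in configuration fails closed instead of handing out admin rights.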

These issues are multifaceted, complex, and highly organization-specific. We’re all at different stages of our data literacy journey, so universal advice is hard to give. Hopefully, this article provides food for thought about your situation, resources, and organization. Have you started planning? Remember, the best time to start was yesterday, and the next best time is today.